AI in medical diagnosis integrates imaging, genomics, and clinical data to detect disease patterns with transparent provenance. Systems emphasize interpretability, validation across diverse cohorts, and prospective performance in real-world workflows. They offer early detection, differential weighting, and decision-support tools, while governance addresses privacy, fairness, and external audits. Yet questions remain about safety, accountability, and reproducibility across settings, prompting ongoing scrutiny of standards and regulatory oversight to ensure robust, patient-centered care.

How AI Transforms Diagnosis: Foundations and Capabilities

AI-based diagnostic systems combine data from imaging, genomics, clinical records, and the published literature to identify patterns linked to disease states. Provenance tracking records where each input came from and how it was transformed, which supports reproducibility. Models emphasize interpretability by presenting the rationale behind each finding. The foundations are probabilistic reasoning, validation across cohorts, and transparent methodology. The resulting capabilities include early detection, weighting of competing differential diagnoses, and support for informed clinical decision-making.
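As a rough illustration of the probabilistic reasoning mentioned above, the sketch below applies Bayes' rule to a single binary test result. The function name `post_test_probability` and the example figures are assumptions chosen for illustration, not values from any particular diagnostic system.

```python
def post_test_probability(prior: float, sensitivity: float,
                          specificity: float, positive: bool = True) -> float:
    """Update a disease probability after one binary test result (Bayes' rule).

    All inputs are probabilities strictly between 0 and 1.
    """
    for name, value in (("prior", prior), ("sensitivity", sensitivity),
                        ("specificity", specificity)):
        if not 0.0 < value < 1.0:
            raise ValueError(f"{name} must be strictly between 0 and 1")

    if positive:
        true_pos = sensitivity * prior
        false_pos = (1.0 - specificity) * (1.0 - prior)
        return true_pos / (true_pos + false_pos)

    false_neg = (1.0 - sensitivity) * prior
    true_neg = specificity * (1.0 - prior)
    return false_neg / (false_neg + true_neg)


# Illustrative numbers only: 1% prevalence, 90% sensitivity, 95% specificity.
p = post_test_probability(prior=0.01, sensitivity=0.90, specificity=0.95)
print(f"Probability of disease after a positive result: {p:.3f}")
```

With these assumed inputs, a positive result raises the probability from 1% to roughly 15%, which shows why a low-prevalence condition can still yield many false positives even with an accurate test.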

Ensuring Trust: Accuracy, Fairness, and Privacy in AI Tools

Ensuring trust in AI tools for medical diagnosis requires rigorous attention to accuracy, fairness, and privacy across all stages of development and deployment. Data governance structures ensure accountable data handling, provenance, and consent, while model interpretability clarifies decision pathways.

Continuous validation, bias assessment, and external auditing reinforce reliability, enabling clinicians and patients to evaluate risk without compromising safety or autonomy.
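One simple form of bias assessment is to compare sensitivity and specificity across patient subgroups. The sketch below assumes binary labels and a hypothetical record layout of `(group, y_true, y_pred)`; real audits would typically add confidence intervals and far larger samples.

```python
from collections import defaultdict


def subgroup_metrics(records):
    """Compute per-subgroup sensitivity and specificity.

    records: iterable of (group, y_true, y_pred) tuples with binary labels.
    Returns a dict keyed by group; a metric is None when it is undefined
    (for example, no positive cases in that subgroup).
    """
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for group, y_true, y_pred in records:
        if y_true and y_pred:
            counts[group]["tp"] += 1
        elif y_true and not y_pred:
            counts[group]["fn"] += 1
        elif not y_true and y_pred:
            counts[group]["fp"] += 1
        else:
            counts[group]["tn"] += 1

    results = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        results[group] = {
            "n": positives + negatives,
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return results


# Toy data for illustration only; group labels are placeholders.
toy = [("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
       ("B", 1, 1), ("B", 0, 1), ("B", 0, 0)]
for group, metrics in sorted(subgroup_metrics(toy).items()):
    print(group, metrics)
```

A large gap between subgroups on either metric is a signal to investigate further, not proof of unfairness on its own, since small subgroups produce noisy estimates.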

From Imaging to Risk Prediction: Real-World Clinical Applications

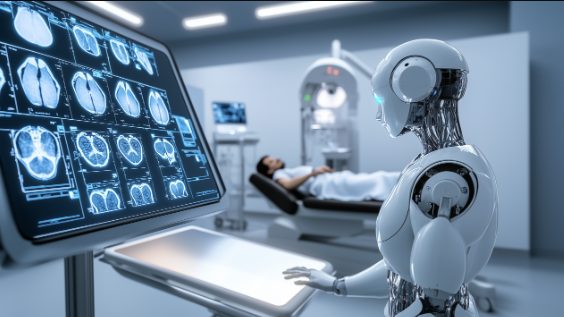

From imaging data to risk prediction, real-world clinical applications showcase how diagnostic radiology, genomics, and wearable-derived signals integrate to stratify patient risk and guide management decisions.

In practical settings, imaging workflows streamline data capture, annotation, and integration with clinical models, producing actionable risk prediction outputs that support personalized care while maintaining rigorous validation, transparency, and reproducibility across diverse populations.

Navigating Validation and Regulation: Oversight, Standards, and Transparency

Navigating validation and regulation requires a disciplined examination of oversight mechanisms, methodological standards, and the disclosure of performance metrics across diverse populations.

Validation pathways and regulatory requirements differ across jurisdictions, but transparency standards and data governance are core elements in each.

Rigorous evaluation uncovers biases, ensures reproducibility, and clarifies accountability, enabling evidence-based adoption while preserving clinician autonomy, patient safety, and ethical responsibility in AI-enabled diagnosis.

Frequently Asked Questions

How Is Patient Consent Handled for AI-Assisted Diagnoses?

Consent for AI-assisted diagnosis should be informed and voluntary. Patient privacy is protected through data minimization, de-identification, and robust access controls. Researchers and clinicians document the scope of consent, how the data will be used, and how patients can withdraw, so that participation remains transparent and respects patient rights.
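Data minimization and pseudonymization can be sketched in a few lines. The example below is a minimal illustration only: the field names, the `ALLOWED_FIELDS` allow-list, and the helper names are hypothetical, and a keyed hash by itself does not amount to de-identification under any specific regulation.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields a downstream model is assumed to need.
ALLOWED_FIELDS = {"age_band", "sex", "finding_code"}


def pseudonymize_id(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient identifier with a keyed SHA-256 digest (HMAC)."""
    return hmac.new(secret_key, patient_id.encode("utf-8"),
                    hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and attach a pseudonymous identifier."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["pseudo_id"] = pseudonymize_id(record["patient_id"], secret_key)
    return reduced


# Illustrative record; every value here is made up.
raw = {
    "patient_id": "12345",
    "name": "Example Patient",
    "age_band": "60-69",
    "sex": "F",
    "finding_code": "R91.1",
}
print(minimize_record(raw, secret_key=b"replace-with-a-managed-secret"))
```

The key must be stored separately under access control, because anyone holding it can link pseudonyms back to known identifiers.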

Can AI Replace Clinicians in Medical Decision-Making?

AI cannot fully replace clinicians in medical decision-making. It supports decisions, but expertise and accountability remain with human practitioners. In practice this means clinicians work alongside AI tools and oversee their outputs within evidence-based, patient-centered care.

What Happens if AI Misdiagnoses a Patient?

When an AI-assisted decision leads to a misdiagnosis, it must be identified and corrected. Consequences can include patient harm, the need for remedial care, and potential medicolegal liability. Rigorous verification, clear accountability, and transparent disclosure help limit these risks and support evidence-based, patient-centered decisions.

How Do AI Tools Learn From Biased or Incomplete Data?

AI tools trained on biased or incomplete data tend to reproduce those gaps in their predictions. Reducing this risk requires transparent datasets, careful scrutiny of data quality, explicit bias mitigation, and rigorous, evidence-based evaluation before the tools can be trusted.

What Are Cost and Accessibility Implications for AI Diagnostics?

Cost efficiency hinges on scalable infrastructure and maintenance, while deployment barriers include clinician training, data integration, and regulatory compliance; overall, accessibility hinges on funding, equitable access, and robust validation to balance innovation with patient safety.

Conclusion

AI-driven diagnosis stands on a foundation of multimodal data integration, rigorous validation, and transparent provenance. These systems can support earlier detection, weighting of differential diagnoses, and decision support across diverse populations. Upholding accuracy, fairness, and privacy requires robust governance, external audits, and standards-driven methodologies. Real-world workflows must balance efficiency with patient autonomy. Challenges remain, but adherence to reproducibility and regulatory rigor offers a path toward safer, more equitable care.